The Challenge of Developing an Unbiased AI

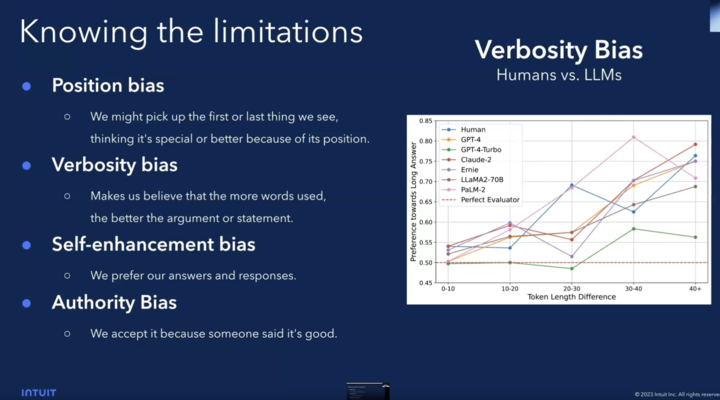

As AI models become a greater part of the digital industrial revolution, it has become vital for the Data Science community to ensure that they develop ethical and unbiased AI systems that represent fairness and responsibility. Whether trained through supervised, self-supervised, or unsupervised learning, machines learn through pattern recognition. Machine Learning specialists have biases of their own, which can be projected onto the technology they build. Biased AI can lead to unfair, inequitable, or even racist models that reflect the preconceived biases of tech teams or data sources.

Will AI Necessarily Be Racist?

The following example gives an idea of the difficulties encountered in developing ethical and unbiased AI models through machine learning. In 2016, Microsoft launched ‘Tay’, an AI Twitter chatbot designed to develop conversational understanding by interacting with humans. You could tweet at Tay and she would learn through chatting with different users. Within 24 hours, Microsoft pulled the plug on the project after Tay posted several overtly racist and sexist tweets. Algorithms do what they are taught and can reproduce social patterns that exist even if they are not immediately apparent. The program wasn’t inherently racist, but it projected the racism it learned.

Inclusion is the Key for Removing Bias in AI Systems

It is the responsibility of Data Scientists to ensure that data is handled with respect for different cultures, classes, and other marginalized perspectives to produce the most inclusive analysis and output possible. AI has the potential to aid and uplift people, but only if it is inclusive and used ethically. Ontological biases based on class, race, ethnicity, and gender will be reflected in the concepts and culture of a product, team, and company. If any one group is overrepresented, it can skew the data and reinforce prejudices held by different groups of people.
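To make the overrepresentation point concrete, here is a minimal sketch of how a team might check group balance in a labeled dataset. The function names, the group labels `A`, `B`, `C`, and the `max_ratio` threshold are all hypothetical choices for illustration, not part of any specific tool or standard.

```python
from collections import Counter

def group_shares(labels):
    """Return each group's share of the dataset as a fraction of all samples."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}

def flag_overrepresented(labels, max_ratio=2.0):
    """List groups with more than `max_ratio` times the samples of the smallest group.

    `max_ratio` is an assumed, illustrative threshold; a real project would
    choose it based on its own population and use case.
    """
    counts = Counter(labels)
    smallest = min(counts.values())
    return sorted(g for g, c in counts.items() if c > max_ratio * smallest)

# Hypothetical demographic labels attached to a 100-sample dataset.
sample_labels = ["A"] * 70 + ["B"] * 18 + ["C"] * 12

shares = group_shares(sample_labels)
flagged = flag_overrepresented(sample_labels)
print(shares)
print(flagged)
```

A check like this only surfaces imbalance in the labels you already track; it says nothing about groups that were never recorded in the first place.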

Computer Vision, the field of computer science focusing on enabling computers to identify objects and people in images and videos, is particularly sensitive to this issue. Robert Williams was arrested in Detroit, Michigan on suspicion of stealing five watches after a facial recognition system misidentified him. The system was unable to distinguish between Black male faces and wrongly produced his ID when it cross-referenced surveillance footage with driver’s license photos. During the interrogation, officers held a photo from the surveillance footage against Robert Williams’ face. He responded, “I hope you don’t think all Black men look alike.” Once the officers realized their mistake, he was released, after having been held for over a day in a crowded holding cell.

Tackling the Challenge of a Biased AI through Diversity

The Machine Learning community must immediately take steps to control and monitor racism in data sets and the internalized bias of tech teams. Doing so leads to cleaner data and protects marginalized people against the threats of biased AI, which can take away social and economic opportunities based on arbitrary indicators. These issues can be at least partially mitigated through an inclusive and comprehensive technical approach.

This starts with looking at our data and handling it responsibly. You need to know your data: how it was collected and annotated, as well as where and how it was sourced.

- Where you sourced your data can massively impact results and perceptions of the dataset.

- Who is annotating your data? Ensuring that the team doing data annotation is diverse and represents the full range of future users can offset prejudices and improve data coverage.

- Building a diverse team at your office helps prevent oversights on inclusivity. Teams can receive training on the risks of biased data and how to mitigate the effects of AI bias.

- If you plan on outsourcing computer vision, choose a company that works with diverse, global annotators from a wide variety of countries and cultures to prevent broader skews in the data.

- Once systems are launched, monitoring data in production is key to preventing discrimination and mishaps with data. Without active monitoring, bias can be overlooked by ML specialists on teams that aren’t inclusive or cognizant of these issues.
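As a rough illustration of the production monitoring described in the last point, the sketch below compares error rates across groups and raises a flag when the gap grows too large. The record format, the group names, and `ALERT_THRESHOLD` are assumptions made for this example; real systems would pick metrics and thresholds suited to their own domain.

```python
from collections import defaultdict

def error_rate_by_group(records):
    """Compute the fraction of wrong predictions for each group.

    Each record is a (group, predicted, actual) tuple.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

def max_error_gap(rates):
    """Return the difference between the worst and best per-group error rates."""
    return max(rates.values()) - min(rates.values())

# Hypothetical production log: (group, predicted label, actual label).
production_log = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 1),
    ("group_b", 1, 0), ("group_b", 0, 1), ("group_b", 1, 1), ("group_b", 0, 0),
]

ALERT_THRESHOLD = 0.1  # assumed tolerance for the gap between groups

rates = error_rate_by_group(production_log)
gap = max_error_gap(rates)
needs_review = gap > ALERT_THRESHOLD
print(rates, gap, needs_review)
```

In this toy log, `group_b` is misclassified twice as often as `group_a`, so the check asks for a human review. Overall accuracy alone would hide that difference, which is why the rates are tracked per group.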

How Tasq.ai Addresses AI Bias Through Diversity

Tasq.ai developed the most comprehensive global distributed network of Tasqers that can be deployed immediately to create high-quality unbiased datasets for your Machine Learning initiatives. We empower the most advanced data science teams to outsource their data labeling jobs to a diverse global crowd, thus eliminating biases that can arise when relying on locally hired BPOs. The Tasq.ai platform also enables data scientists to divide tasks per country, language, or culture as requested and to distribute the data load per request instantly without any hassle, thus encouraging the development of unbiased AI systems.